TF-Notes: Difference between revisions

Revision as of 01:16, 23 January 2025

Shallow Neural Networks vs. Deep Neural Networks

Shallow Neural Networks:

- Consist of one hidden layer (occasionally two, but rarely more).

- Typically take inputs as feature vectors, where preprocessing transforms raw data into structured inputs.

Deep Neural Networks:

- Consist of three or more hidden layers.

- Often have more neurons per layer, depending on the model design.

- Can process raw, high-dimensional data such as images, text, and audio directly, often using specialized architectures like Convolutional Neural Networks (CNNs) for images or Recurrent Neural Networks (RNNs) for sequences.

Why deep learning took off: Advancements in the field

- ReLU Activation Function:

- Mitigates the vanishing gradient problem, because its gradient is 1 for positive inputs instead of shrinking toward zero, which makes much deeper networks trainable.

- Availability of More Data:

- Deep learning benefits from large datasets which have become accessible due to the growth of the internet, digital media, and data collection tools.

- Increased Computational Power:

- GPUs and specialized hardware (e.g., TPUs) allow faster training of deep models.

- Training runs that once took days or weeks can now finish in hours or days.

- Algorithmic Innovations:

- Advancements such as batch normalization, dropout, and better weight initialization have improved the stability and efficiency of training.

- Plateau of Conventional Machine Learning Algorithms:

- Traditional algorithms often stop improving once the amount of data or the model complexity passes a certain point.

- Deep learning continues to scale with data size, improving performance as more data becomes available.
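The ReLU point in the list above can be illustrated with a small calculation. The sketch below is a simplification: it ignores weights and multiplies only the activation derivatives that backpropagation would chain across layers. The function names and the depth of 20 are illustrative assumptions, not part of the original notes.

```python
import math

def sigmoid_grad(z):
    """Derivative of the sigmoid; its maximum value is 0.25 (at z = 0)."""
    s = 1.0 / (1.0 + math.exp(-z))
    return s * (1.0 - s)

def relu_grad(z):
    """Derivative of ReLU: 1 for positive inputs, 0 otherwise."""
    return 1.0 if z > 0 else 0.0

def chained_gradient(grad_fn, z, depth):
    """Multiply the same activation derivative across `depth` layers.

    Simplification: weights are ignored, so this isolates only the
    activation function's contribution to the backpropagated signal.
    """
    product = 1.0
    for _ in range(depth):
        product *= grad_fn(z)
    return product

depth = 20  # hypothetical network depth
sigmoid_signal = chained_gradient(sigmoid_grad, 0.0, depth)  # best case for sigmoid
relu_signal = chained_gradient(relu_grad, 1.0, depth)        # any positive input

print(f"sigmoid, {depth} layers: {sigmoid_signal:.3e}")
print(f"ReLU,    {depth} layers: {relu_signal:.3e}")
```

Even in the sigmoid's best case the signal shrinks by a factor of 4 per layer, while ReLU passes it through unchanged for positive inputs.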

In short:

The success of deep learning is due to advancements in the field, the availability of large datasets, and powerful computation.

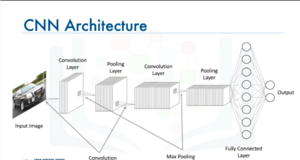

CNNs (supervised tasks) vs. Traditional Neural Networks:

- CNNs are considered deep neural networks. They are specialized architectures designed for tasks involving grid-like data, such as images and videos. Unlike traditional fully connected networks, CNNs utilize convolutions to detect spatial hierarchies in data. This enables them to efficiently capture patterns and relationships, making them better suited for image and visual data processing.

Key Features of CNNs:

- Input as Images: CNNs directly take images as input, which allows them to process raw pixel data without extensive manual feature engineering.

- Efficiency in Training: By leveraging local receptive fields, parameter sharing, and spatial hierarchies, CNNs need far fewer parameters than fully connected networks on the same input, which makes training more computationally efficient.

- Applications: CNNs excel at solving problems in image recognition, object detection, segmentation, and other computer vision tasks.
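To give a rough sense of what parameter sharing saves, the sketch below counts trainable parameters for one fully connected layer and one convolutional layer on a 128 x 128 RGB image. The sizes (16 units, 16 filters of 2 x 2) are chosen to mirror the Keras model in these notes; the helper names are illustrative.

```python
def dense_params(n_inputs, n_units):
    """One weight per input plus one bias, for every unit."""
    return (n_inputs + 1) * n_units

def conv2d_params(kernel_h, kernel_w, in_channels, n_filters):
    """One small kernel plus one bias per filter, shared across all positions."""
    return (kernel_h * kernel_w * in_channels + 1) * n_filters

height, width, channels = 128, 128, 3

dense_count = dense_params(height * width * channels, 16)
conv_count = conv2d_params(2, 2, channels, 16)

print("dense layer, 16 units:      ", dense_count)  # 786448
print("conv layer, 16 2x2 filters: ", conv_count)   # 208
```

The convolutional layer's parameter count does not depend on the image size at all, because the same kernel is reused at every position.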

Convolutional Layers and ReLU

- Convolutional Layers apply a filter (kernel) over the input data to extract features such as edges, textures, or patterns.

- After the convolution operation, the resulting feature map undergoes a non-linear activation function, commonly ReLU (Rectified Linear Unit).
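The two steps above can be sketched in plain Python. This is a minimal illustration, assuming a single-channel image, no padding, and a stride of 1; the tiny image and the hand-picked edge-detecting kernel are made up for the example (in a real CNN the kernel values are learned).

```python
def conv2d_valid(image, kernel):
    """Slide the kernel over the image (stride 1, no padding) and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

def relu(feature_map):
    """Replace negative responses with zero, element by element."""
    return [[max(0, v) for v in row] for row in feature_map]

# A bright vertical stripe on a dark background.
image = [
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
]
# Responds positively where intensity rises from left to right.
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = conv2d_valid(image, kernel)
activated = relu(feature_map)

print(feature_map)  # [[2, 0, -2], [2, 0, -2]]
print(activated)    # [[2, 0, 0], [2, 0, 0]]
```

The kernel fires on the stripe's left edge, and ReLU discards the negative response on the right edge.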

Pooling Layers

Pooling layers are used to down-sample the feature map, reducing its size while retaining important features.

Why Use Pooling?

- Reduces dimensionality, which decreases computation and helps limit overfitting.

- Keeps the most significant information from the feature map.

- Increases the spatial invariance of the network (i.e., the ability to recognize patterns regardless of location).
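As an illustration of down-sampling, here is a minimal max-pooling sketch in plain Python. It assumes a single-channel feature map and a 2 x 2 window with stride 2; the input values are made up.

```python
def max_pool2d(feature_map, size=2, stride=2):
    """Keep the largest value in each size x size window."""
    out_h = (len(feature_map) - size) // stride + 1
    out_w = (len(feature_map[0]) - size) // stride + 1
    return [
        [
            max(feature_map[i * stride + di][j * stride + dj]
                for di in range(size) for dj in range(size))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

feature_map = [
    [1, 3, 2, 0],
    [4, 2, 1, 1],
    [0, 1, 5, 6],
    [2, 2, 7, 8],
]

pooled = max_pool2d(feature_map)
print(pooled)  # [[4, 2], [2, 8]]
```

The 4 x 4 map shrinks to 2 x 2 while the strongest response in each region survives, which is why small shifts in the input often leave the pooled output unchanged.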

Keras Code

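from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import Conv2D, MaxPooling2D, Flatten, Dense

num_classes = 10  # placeholder value (not given in the notes); set to the number of target classes

input_shape = (128, 128, 3)  # height, width, channels (RGB)

model = Sequential()

model.add(Conv2D(16, kernel_size=(2, 2), strides=(1, 1), activation='relu', input_shape=input_shape))  # first feature extractor

model.add(MaxPooling2D(pool_size=(2, 2), strides=(2, 2)))  # halve the spatial size

model.add(Conv2D(32, kernel_size=(2, 2), strides=(1, 1), activation='relu'))

model.add(MaxPooling2D(pool_size=(2, 2)))  # strides default to pool_size

model.add(Flatten())  # feature maps -> one vector per image

model.add(Dense(100, activation='relu'))

model.add(Dense(num_classes, activation='softmax'))  # class probabilities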
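It can help to trace how the tensor shape changes through the layers above. The sketch below does that arithmetic in plain Python, without Keras. It assumes the Keras defaults that the model relies on: `padding='valid'` for both layer types, and pooling strides equal to the pool size when not given. The helper name and the layer table are illustrative.

```python
def valid_output_size(size, window, stride):
    """Output length along one axis when no padding is applied."""
    return (size - window) // stride + 1

size, channels = 128, 3
trace = [("input", size, channels)]

# (name, window, stride, output channels or None if unchanged)
layers = [
    ("conv_1", 2, 1, 16),
    ("pool_1", 2, 2, None),
    ("conv_2", 2, 1, 32),
    ("pool_2", 2, 2, None),
]

for name, window, stride, filters in layers:
    size = valid_output_size(size, window, stride)
    if filters is not None:
        channels = filters
    trace.append((name, size, channels))

flattened = size * size * channels

for name, s, c in trace:
    print(f"{name}: {s} x {s} x {c}")
print("flatten:", flattened)  # 30752
```

The flattened length (30752) is the input size of the first `Dense` layer, so that layer holds most of the model's weights.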

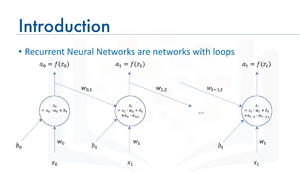

Recurrent Neural Networks (supervised tasks)

- Recurrent Neural Networks (RNNs) are a class of neural networks specifically designed to handle sequential data. They have loops that allow information to persist, meaning they don't just take the current input at each time step but also consider the output (or hidden state) from the previous time step. This enables them to process data where the order and context of inputs matter, such as time series, text, or audio.

How RNNs Work:

- Sequential Data Processing:

- At each time step $t$, the network takes:

- The current input $x_t$,

- A bias $b_t$,

- And the hidden state (output) from the previous time step, $a_{t-1}$.

- These are combined to compute the current hidden state $a_t$.

- Recurrent Connection:

- The feedback loop, as shown in the diagram, allows information to persist across time steps. This is the "memory" mechanism of RNNs.

- The connection between $a_{t-1}$ (previous state) and $z_t$ (current computation) captures temporal dependencies in sequential data.

- Activation Function:

- The raw output $z_t$ passes through a non-linear activation function $f$ (commonly tanh or ReLU) to compute $a_t$, the current output or hidden state.
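The recurrence described above can be sketched with scalars. This is a simplification: real RNNs use weight matrices and vector states, and the weight values here (`w_x`, `w_a`, `b`) are hypothetical constants rather than learned parameters.

```python
import math

def rnn_step(x_t, a_prev, w_x, w_a, b):
    """One time step: combine input, previous state, and bias, then apply tanh."""
    z_t = w_x * x_t + w_a * a_prev + b
    return math.tanh(z_t)

def run_rnn(inputs, w_x=0.5, w_a=0.8, b=0.0, a_0=0.0):
    """Feed a sequence through the cell, carrying the hidden state forward."""
    states = []
    a_prev = a_0
    for x_t in inputs:
        a_prev = rnn_step(x_t, a_prev, w_x, w_a, b)
        states.append(a_prev)
    return states

# A single pulse followed by silence.
states = run_rnn([1.0, 0.0, 0.0])
for t, a_t in enumerate(states, start=1):
    print(f"t={t}: a_t={a_t:.4f}")
```

The state stays non-zero after the input drops to zero, which is the "memory" carried by the feedback loop. It also fades at each step, which hints at why plain RNNs struggle with long-range dependencies.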

Long Short-Term Memory (LSTM) Model

Long Short-Term Memory (LSTM) networks are a popular type of Recurrent Neural Network (RNN). They mitigate the vanishing gradient problem, allowing them to capture long-term dependencies in sequential data. They achieve this through a memory cell and a system of gates that regulate the flow of information.

Applications Include:

- Image Generation:

- LSTMs can generate images pixel by pixel or feature by feature.

- Often combined with Convolutional Neural Networks (CNNs) for spatial feature extraction.

- Handwriting Generation:

- Learn sequences of strokes and predict future strokes to generate realistic handwriting.

- Can mimic human writing styles given text input.

- Automatic Captioning for Images and Videos:

- Combines CNNs (for extracting visual features) with LSTMs (for generating captions).

- Example: "A group of people playing soccer in a field."

Key Features of LSTMs

- Memory Cell:

- Stores information for long durations, addressing the short-term memory limitations of standard RNNs.

- Gating Mechanism:

- Forget Gate: Decides which parts of the memory to discard.

- Input Gate: Determines what new information to add to the memory.

- Output Gate: Controls what part of the memory is output at the current step.

- Handles Long-Term Dependencies:

- LSTMs can maintain context over hundreds of time steps, making them suitable for tasks like text generation, speech synthesis, and video processing.

- Flexible Sequence Modeling:

- Suitable for variable-length input sequences, such as sentences, time-series data, or video frames.
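The memory cell and the three gates listed above can be sketched as a single scalar LSTM step. This follows the standard LSTM update equations, but with scalars instead of vectors and with hand-picked parameters (the `params` dictionary is hypothetical) rather than learned weights.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x_t, h_prev, c_prev, params):
    """One LSTM time step. `params` maps a gate name to (w_x, w_h, b)."""
    def pre_activation(name):
        w_x, w_h, b = params[name]
        return w_x * x_t + w_h * h_prev + b

    f = sigmoid(pre_activation("forget"))       # how much old memory to keep
    i = sigmoid(pre_activation("input"))        # how much new information to admit
    o = sigmoid(pre_activation("output"))       # how much memory to expose
    g = math.tanh(pre_activation("candidate"))  # proposed new content

    c_t = f * c_prev + i * g     # update the memory cell
    h_t = o * math.tanh(c_t)     # hidden state / output at this step
    return h_t, c_t

# Hand-picked values: forget gate almost fully open, input gate almost closed.
params = {
    "forget": (0.0, 0.0, 10.0),
    "input": (0.0, 0.0, -10.0),
    "output": (0.0, 0.0, 0.0),
    "candidate": (1.0, 0.0, 0.0),
}

h, c = 0.0, 2.0  # start with something already stored in the cell
for x_t in [5.0, -3.0, 4.0]:
    h, c = lstm_step(x_t, h, c, params)

print(f"cell state after 3 steps: {c:.4f}")
```

With these gate settings the cell state stays close to its starting value of 2.0 despite large inputs, showing how the gates let an LSTM hold information across time steps.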

Additional Notes

- Variants:

- GRUs (Gated Recurrent Units): A simplified version of LSTMs with fewer parameters, often faster to train but sometimes less effective for longer dependencies.

- Bi-directional LSTMs: Process sequences in both forward and backward directions, capturing past and future context simultaneously.

- Use Cases Beyond the Above:

- Speech Recognition: Transcribe spoken words into text.

- Music Generation: Compose music by predicting the sequence of notes.

- Anomaly Detection: Detect unusual patterns in time-series data, like network intrusion or equipment failure.

- Challenges:

- LSTMs are more computationally expensive than simple RNNs.

- They require careful hyperparameter tuning (e.g., learning rate, number of layers, units per layer).

Autoencoders (unsupervised learning)

Autoencoding is a type of data compression algorithm where the compression (encoding) and decompression (decoding) functions are learned automatically from the data, typically using neural networks.

Key Features of Autoencoders

- Unsupervised Learning:

- Autoencoders are unsupervised neural network models.

- They use backpropagation, but the input itself acts as the output label during training.

- Data-Specific:

- Autoencoders are specialized for the type of data they are trained on and may not generalize well to data that is significantly different.

- Nonlinear Transformations:

- Autoencoders can learn nonlinear representations, which makes them more powerful than techniques like Principal Component Analysis (PCA) that can only handle linear transformations.

- Applications:

- Data Denoising: Removing noise from images, audio, or other data.

- Dimensionality Reduction: Reducing the number of features in data while preserving key information, often used for visualization or preprocessing.
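The idea that the input itself acts as the label can be shown with a very small linear autoencoder written in plain Python. This is a sketch under strong assumptions: 2-value inputs, a 1-value bottleneck, no non-linearity, and made-up data lying on a line. Practical autoencoders would use a neural network library and non-linear layers.

```python
def reconstruct(x, enc, dec):
    """Encode a 2-value input to one code value, then decode back to 2 values."""
    z = sum(e * xi for e, xi in zip(enc, x))
    return z, [d * z for d in dec]

def mean_loss(data, enc, dec):
    """Mean squared reconstruction error; the target is the input itself."""
    total = 0.0
    for x in data:
        _, x_hat = reconstruct(x, enc, dec)
        total += sum((xh - xi) ** 2 for xh, xi in zip(x_hat, x))
    return total / len(data)

def train(data, enc, dec, lr=0.01, epochs=200):
    """Full-batch gradient descent on the reconstruction loss."""
    enc, dec = list(enc), list(dec)
    n = len(data)
    for _ in range(epochs):
        g_enc = [0.0] * len(enc)
        g_dec = [0.0] * len(dec)
        for x in data:
            z, x_hat = reconstruct(x, enc, dec)
            err = [xh - xi for xh, xi in zip(x_hat, x)]  # compared against x, not a label
            back = sum(e * d for e, d in zip(err, dec))  # error passed back through the decoder
            for k in range(len(x)):
                g_dec[k] += 2 * err[k] * z
                g_enc[k] += 2 * back * x[k]
        enc = [e - lr * g / n for e, g in zip(enc, g_enc)]
        dec = [d - lr * g / n for d, g in zip(dec, g_dec)]
    return enc, dec

# Made-up data: every point lies on the line y = x, so one number can describe it.
data = [(1.0, 1.0), (2.0, 2.0), (-1.0, -1.0), (0.5, 0.5)]
enc0, dec0 = [0.5, 0.5], [0.5, 0.5]

loss_before = mean_loss(data, enc0, dec0)
enc, dec = train(data, enc0, dec0)
loss_after = mean_loss(data, enc, dec)

print(f"loss before: {loss_before:.5f}")
print(f"loss after:  {loss_after:.2e}")
```

No labels appear anywhere in the training loop, and the single bottleneck value is the compressed representation.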

Types of Autoencoders

- Standard Autoencoders:

- Composed of an encoder-decoder structure with a bottleneck layer for compressed representation.

- Denoising Autoencoders:

- Designed to reconstruct clean data from noisy inputs.

- Variational Autoencoders (VAEs):

- A probabilistic extension of autoencoders that can generate new data points similar to the training data.

- Sparse Autoencoders:

- Add a sparsity constraint on the hidden activations, so that only a few units are active for any given input.

Clarifications on Specific Points

- Restricted Boltzmann Machines (RBMs):

- RBMs are related to autoencoders, but they are not autoencoders themselves.

- RBMs are generative models that have been used to pretrain deep networks.

- RBMs are more closely related to Deep Belief Networks (DBNs), which are built by stacking RBMs.

- Fixing Imbalanced Datasets:

- While autoencoders can be used to oversample minority classes by learning the structure of the minority class and generating synthetic samples, this is not their primary application.

Applications of Autoencoders

- Data Denoising:

- Remove noise from images, audio, or other forms of data.

- Dimensionality Reduction:

- Learn compressed representations for data visualization or feature extraction.

- Estimating Missing Values:

- Reconstruct missing data by leveraging patterns in the dataset.

- Automatic Feature Extraction:

- Extract meaningful features from unstructured data like text, images, or time-series data.

- Anomaly Detection:

- Learn normal patterns in the data and identify deviations, useful for fraud detection, network intrusion, or manufacturing defect identification.

- Data Generation:

- Variational autoencoders can generate new, similar data points, such as synthetic images.
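The anomaly detection use case above usually relies on reconstruction error: inputs that resemble the training data reconstruct well, and unusual inputs do not. The sketch below illustrates this with a linear encoder and decoder whose weights are fixed by hand, standing in for a trained autoencoder that has learned "normal points lie near the line y = x". The weights, the sample points, and the threshold of 0.5 are all hypothetical.

```python
import math

# Hand-set weights standing in for a trained model (assumption for illustration).
ENC = (1 / math.sqrt(2), 1 / math.sqrt(2))
DEC = ENC

def reconstruction_error(x):
    """Squared distance between an input and its reconstruction."""
    z = ENC[0] * x[0] + ENC[1] * x[1]   # encode: 2 values -> 1 code
    x_hat = (DEC[0] * z, DEC[1] * z)    # decode: 1 code -> 2 values
    return (x[0] - x_hat[0]) ** 2 + (x[1] - x_hat[1]) ** 2

def is_anomaly(x, threshold=0.5):
    """Flag inputs that the model reconstructs poorly."""
    return reconstruction_error(x) > threshold

typical_point = (1.0, 1.1)   # close to the pattern the model represents
unusual_point = (1.0, -1.0)  # far from it

for point in (typical_point, unusual_point):
    print(point, f"error={reconstruction_error(point):.4f}", "anomaly:", is_anomaly(point))
```

In practice the threshold would be chosen from the distribution of reconstruction errors on known-normal data rather than fixed in advance.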